Optimizing Cod3x Agents

Article three in the Cod3x Trading series

This article is part three of my series exploring the Cod3x ecosystem and the capabilities of its AI agent platform.

If you haven’t read the first two articles in the series, on setting up your agent with an advanced “Trading Strategy” and learning to pair trade with it, I suggest you read those first.

After setting up your agent and learning the basics of pair trading, you will be ready to explore this article, where we learn how to optimize our agent to get the most out of it while reducing cost (credit usage).

Why you should optimize your Agent

Most users will ask: why should I optimize my agent? Why is it needed, given that we are using AI that understands large amounts of data and text? Shouldn’t the agent already be optimized out of the box?

The answer could be yes: the agent may already be optimal. But as we use our agent more, we will learn things we can apply to reduce the amount of text it is “reading” or “outputting”, both of which cost “credits”.

We can also optimize our agent to save money on “credits” once we’ve learned how the system operates, and again as new tooling becomes available from Cod3x to reduce cost.

What are credits

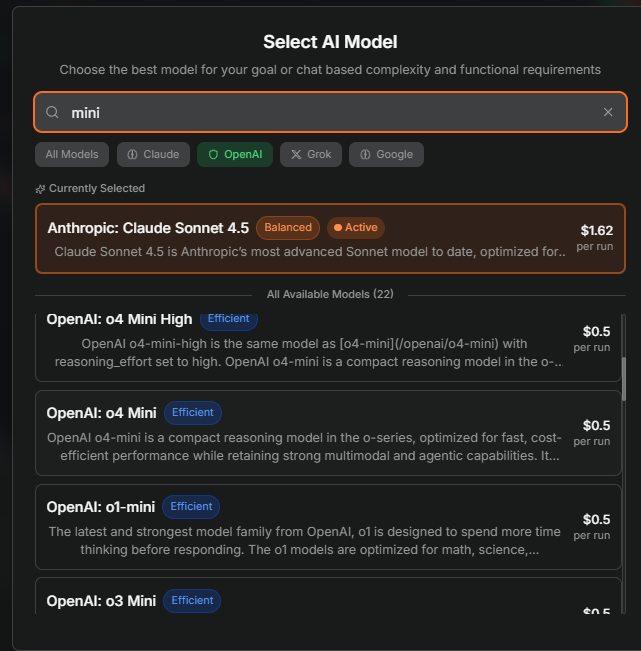

“Credits” on Cod3x translate directly to the token cost from Claude, OpenAI, or similar platforms at a markup.

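The text in a message or prompt is split into “tokens”, which are short chunks of characters (roughly four characters of English text per token, depending on the model’s tokenizer). These tokens are what Cod3x bills as “credits”.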

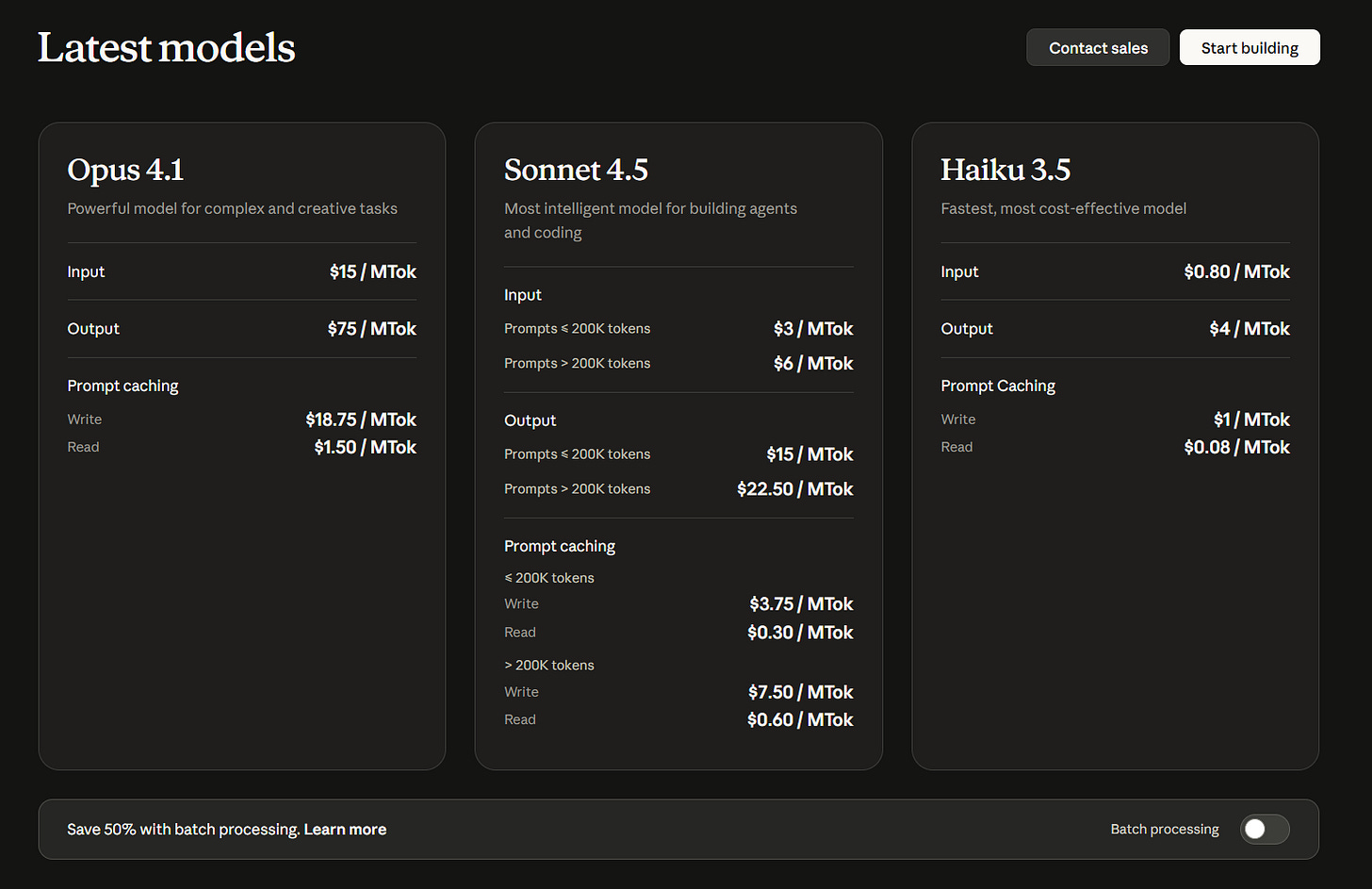

We can see in the image below that as your context grows from adding more text, so does the cost of your input or reply, since the token count grows with the amount of text in the chat.

Models that cost more to train, or that use more reasoning, can cost more to run on a server and therefore end up costing more credits per token.
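To make the relationship between context size and cost concrete, here is a minimal sketch. It is not Cod3x's billing logic: it assumes a rough rule of thumb of about four characters per token for English text (real tokenizers differ per model), and it assumes the whole chat history is sent again as input on every turn. The names `CHARS_PER_TOKEN`, `estimate_tokens`, and `cumulative_input_tokens` are made up for illustration.

```python
import math

# Assumption: a rough rule of thumb, not a real tokenizer.
# English text averages around 4 characters per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the number of tokens in a piece of text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def cumulative_input_tokens(messages: list[str]) -> list[int]:
    """Estimate the input tokens sent on each turn.

    Assumes every earlier message is resent as context, so the
    input size keeps growing as the conversation gets longer.
    """
    totals = []
    running = 0
    for message in messages:
        running += estimate_tokens(message)
        totals.append(running)
    return totals


# Hypothetical chat: a short question, a long pasted strategy, a short follow-up.
chat = [
    "Analyze ETH/BTC for a pair trade.",
    "Here is my full trading strategy: " + "rule " * 200,
    "Now check funding rates.",
]
per_turn = cumulative_input_tokens(chat)
print(per_turn)
```

Under these assumptions, the short follow-up on the third turn still pays for the long strategy text pasted on the second turn, which is why trimming long prompts tends to matter more than trimming short ones.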

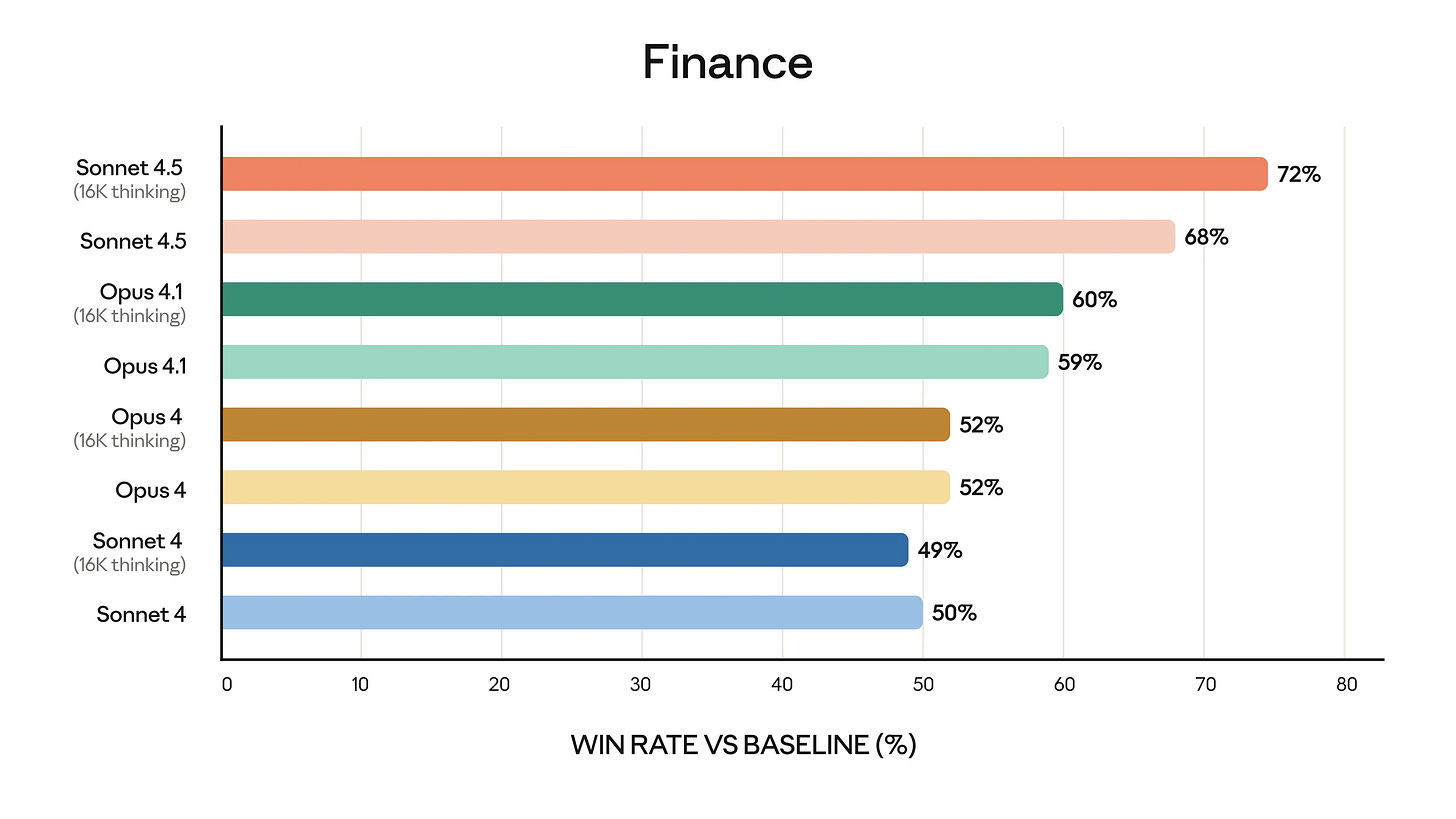

More expensive models like Claude Opus 4.1 can perform “reasoning” and “research”: they work through a prompt in deep, recursive steps, or reach out to external sources to verify that their answer is accurate for the prompt given by the user.

When these models begin their “deep thinking”, they can use more tokens to process their thoughts and branch out their possible answers.

In the example above, you can see why Claude Opus 4.1, with its “deep thinking” ability, costs so much more on Cod3x than something like Sonnet 4.5, which only performs some “regular thinking”.

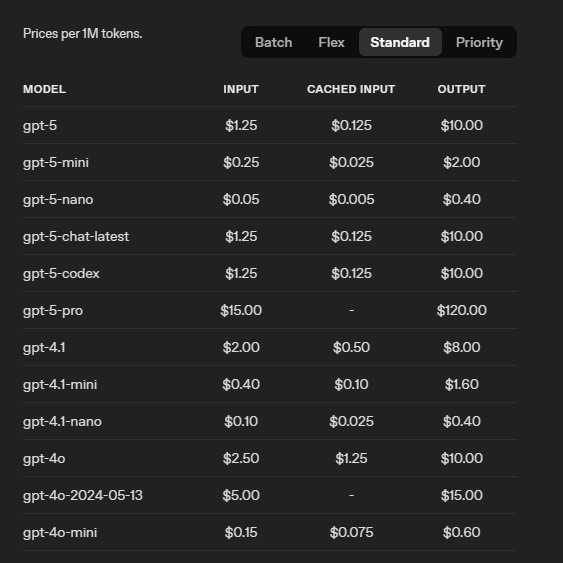

Looking at the pricing for OpenAI, we can now understand why it’s so cheap to run most interactions on GPT-4o mini, which performs no thinking. The cost per million tokens is 20 times lower when using the model directly through a developer API.

These prices translate directly to Cod3x’s credits with a markup, which can be seen when choosing the model you chat with. The model we will be optimizing for in this case is Claude Sonnet 4.5, given that it makes the best use of Cod3x’s tooling without error and provides the best financial analysis compared to similar models.
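The arithmetic behind a per-million-token price plus a markup can be sketched as follows. Every number here is a placeholder rather than a real Cod3x, Anthropic, or OpenAI price, the model names are hypothetical, and the sketch assumes that “thinking” tokens are billed at the output rate. Check the model picker for the actual figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    name: str
    input_per_million: float   # USD per 1M input tokens (placeholder)
    output_per_million: float  # USD per 1M output tokens (placeholder)


def request_cost(
    price: ModelPrice,
    input_tokens: int,
    output_tokens: int,
    thinking_tokens: int = 0,
    markup: float = 0.0,
) -> float:
    """Estimate the cost of one request in USD.

    Assumption: thinking tokens are billed like output tokens,
    and the platform markup is a flat percentage on top.
    """
    billed_output = output_tokens + thinking_tokens
    base = (
        input_tokens * price.input_per_million
        + billed_output * price.output_per_million
    ) / 1_000_000
    return base * (1 + markup)


# Hypothetical models and prices, for illustration only.
cheap = ModelPrice("model-cheap", input_per_million=1.0, output_per_million=4.0)
premium = ModelPrice("model-premium", input_per_million=10.0, output_per_million=40.0)

# Same prompt and reply size; only the premium model spends thinking tokens.
cheap_cost = request_cost(cheap, 8_000, 1_000, markup=0.2)
premium_cost = request_cost(premium, 8_000, 1_000, thinking_tokens=5_000, markup=0.2)
print(f"{cheap.name}: ${cheap_cost:.4f}")
print(f"{premium.name}: ${premium_cost:.4f}")
```

Two levers show up in this sketch: the per-token price of the model you pick, and the number of tokens you send and receive. The rest of this article focuses on the second one.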

Now that we understand how tokens are used and what they cost, we can begin optimizing our prompts and Trading Strategy to reduce token count and therefore credit cost.

Optimizing for credit usage

Since credits can be expensive on Cod3x depending on the model, we will optimize our agent to reduce credit expenditure while still getting the best output from the “Trading Strategy” and daily “Chat Prompt” we developed in articles 1 and 2.